How to read a large CSV file with pandas?

Given a large CSV file, we have to read it with Pandas.

By Pranit Sharma Last updated : September 20, 2023

What are CSV files?

CSV (Comma Separated Values) files are plain text files that store tabular data. As the name suggests, the values on each line are generally separated by commas. The first line usually holds the column names, and each subsequent line holds the values of one row.

col_1_name , col_2_name , col_3_name

row_1_value1 , row_1_value2 , row_1_value3

row_2_value1 , row_2_value2 , row_2_value3

Here, the separator character (,) is called the delimiter. Other popular delimiters include tab (\t), colon (:), and semicolon (;).
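As a minimal sketch of how a different delimiter is handled, the sep parameter of read_csv() names the separator character. The sample data below (names, ages, cities) is hypothetical and is read from an in-memory string rather than a real file, so the snippet runs on its own.

```python
import io
import pandas as pd

# Hypothetical sample data: a small table delimited by semicolons
raw = "name;age;city\nAsha;31;Pune\nRavi;27;Delhi\n"

# sep tells read_csv() which character separates the values
df_semicolon = pd.read_csv(io.StringIO(raw), sep=";")

print(df_semicolon.columns.tolist())
print(df_semicolon.shape)
```

For a tab-delimited file, sep="\t" would be passed in the same way.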

Problem statement

Given a large CSV file, we have to read it with Pandas.

Reading a large CSV file with pandas

Sometimes, loading a large CSV file into the primary memory of the device causes problems. The file can be so large that it does not fit in memory, and the program may slow down or crash.

To overcome this problem, instead of reading the full CSV file, we read chunks of the file into memory.

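We just need to pass the chunksize parameter (an integer) to the read_csv() method; the CSV file is then read in chunks. The chunk size is the number of rows read from the CSV file at a time. With chunksize set, read_csv() returns an iterator that yields one DataFrame per chunk instead of a single DataFrame.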
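To see what chunksize does, the following small sketch reads a hypothetical 10-row CSV held in memory (standing in for a large file) with a chunk size of 4 and records how many rows each chunk contains.

```python
import io
import pandas as pd

# Hypothetical in-memory CSV with 10 data rows, standing in for a large file
raw = "id,value\n" + "".join(f"{i},{i * 10}\n" for i in range(10))

# With chunksize set, read_csv() returns an iterator instead of a DataFrame
reader = pd.read_csv(io.StringIO(raw), chunksize=4)

chunk_lengths = []
for chunk in reader:
    # Each chunk is an ordinary DataFrame with at most 4 rows
    chunk_lengths.append(len(chunk))

print(chunk_lengths)  # [4, 4, 2]
```

The last chunk is smaller because only 2 rows remain after two full chunks of 4.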

Let us understand with the help of an example.

Python program to read a large CSV file with pandas

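# Importing pandas package

import pandas as pd

# Read the CSV file in chunks of 500 rows; this returns an iterator of DataFrames

data = pd.read_csv('D:/test.csv', chunksize=500)

# Combine the chunks into one DataFrame (the combined result must still fit in memory)

df = pd.concat(data)

# Print the dataset

print(df)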

Output

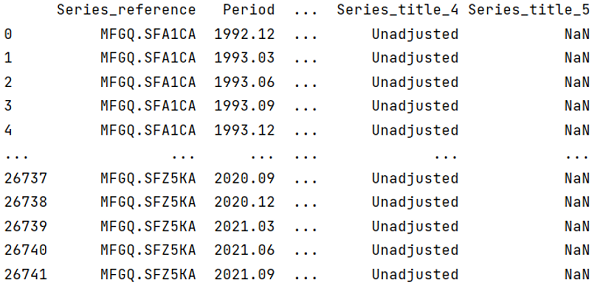

The output of the above program is:
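Note that pd.concat() in the program above rebuilds the full DataFrame, so the whole dataset still ends up in memory. When only a summary is needed, each chunk can be processed and then discarded. The sketch below assumes a hypothetical numeric column named value and uses in-memory data in place of a real file; it keeps only a running total and row count.

```python
import io
import pandas as pd

# Hypothetical in-memory CSV with 10 data rows, standing in for a large file
raw = "id,value\n" + "".join(f"{i},{i * 10}\n" for i in range(10))

total = 0
row_count = 0

# Process one chunk at a time; only the running totals are kept
for chunk in pd.read_csv(io.StringIO(raw), chunksize=4):
    total += int(chunk["value"].sum())
    row_count += len(chunk)

mean_value = total / row_count
print(row_count, total, mean_value)  # 10 450 45.0
```

Only one chunk is held in memory at any moment, so the peak memory use depends on the chunk size rather than on the size of the file.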
