
Dimensions of Big Data - The Five V's of Big Data

Submitted by Uma Dasgupta, on October 09, 2018

Usually, only five V's of big data are discussed. Here we will also introduce a sixth one and explain why it should not be ignored.

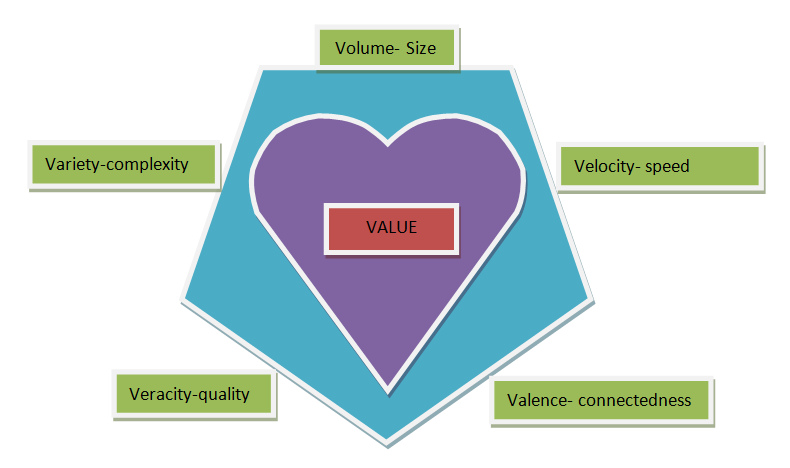

The Five V's of Big Data

The five most commonly discussed V's of big data are:

- Volume

- Variety

- Velocity

- Veracity

- Valence

The sixth V that we will discuss here is Value.

Now let's discuss each of the V's in brief.

1. Volume

The word "big" is in the name itself: big data is voluminous.

Enormous amounts of data are produced every minute. It is hard to even imagine the time, cost, and energy needed to store such an amount of data and extract sense from it.

There are also a number of challenges we encounter when dealing with the massive volume of big data. The first is storage: the space required to store that data efficiently is itself large. We also need to be able to retrieve that data fast enough and move it to processing units in a timely fashion, so that results are available when we need them. This brings additional challenges such as networking, bandwidth, and the cost of storing data.
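To get a feel for the bandwidth challenge, here is a small back-of-the-envelope sketch. The dataset size (one decimal petabyte) and the 10 Gbit/s link are assumptions chosen for illustration, and the calculation ignores protocol overhead and disk speed.

```python
def transfer_time_seconds(size_bytes, link_bits_per_second):
    """Ideal time to move size_bytes over a link, ignoring all overhead."""
    return size_bytes * 8 / link_bits_per_second


PETABYTE = 10**15        # one decimal petabyte, in bytes (assumed dataset size)
TEN_GBPS = 10 * 10**9    # hypothetical 10 Gbit/s network link

seconds = transfer_time_seconds(PETABYTE, TEN_GBPS)
days = seconds / 86_400  # 86,400 seconds in a day
print(f"{seconds:.0f} s, about {days:.1f} days")  # 800000 s, about 9.3 days
```

Even under these ideal conditions, moving a single petabyte takes more than a week, which is why data is often processed close to where it is stored.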

2. Variety

Variety is a form of scalability. Here, scale refers not to size but to increased diversity.

Think of the internet, or even of our daily lives: we come across many different types of data, such as text files, embedded images, and videos.

So variety is another important dimension we need to deal with, because the data that is produced is highly varied.

3. Velocity

By velocity, we refer to the high speed at which data is created, and at which it therefore needs to be stored and analyzed. Our processing speed should match the speed at which the data is produced: a business that cannot take advantage of data as it is generated often misses opportunities because of the delay. Keeping up with the velocity of big data and analyzing it as it is generated can even affect the quality of human life. For example, sensors and smart devices monitoring the human body can detect abnormalities in real time and trigger immediate action, potentially saving lives.
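The sensor example can be sketched as a small streaming check that examines each reading as it arrives rather than after the fact. This is only an illustration: the function name, the window size, the threshold, and the sample heart-rate values are all assumptions, and real monitoring systems use far more careful methods.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(readings, window=5, threshold=3.0):
    """Yield (index, value) for readings far from the recent window's mean."""
    recent = deque(maxlen=window)  # only the last `window` normal readings
    for i, value in enumerate(readings):
        if len(recent) == window:
            mu = mean(recent)
            sigma = stdev(recent)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                yield i, value
                continue  # keep the outlier out of the baseline
        recent.append(value)


# Hypothetical heart-rate stream (beats per minute)
heart_rate = [72, 74, 71, 73, 72, 75, 140, 73, 72]
print(list(detect_anomalies(heart_rate)))  # [(6, 140)]
```

Because the function is a generator, it can consume an endless stream and flag a reading the moment it arrives, without storing the whole history.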

4. Veracity

By veracity, we refer to the quality of the data. Big data is varied and generated at high speed, so it is likely to be noisy and uncertain. It can be full of biases and abnormalities, and it can be imprecise. In short, data has no value if it is not accurate.
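One simple way to get a first impression of data quality is to count how many values are missing or implausible. The sketch below assumes a list of numeric readings and a plausible range supplied by the caller; the function names, the sample temperatures, and the range are hypothetical.

```python
import math


def is_missing(value):
    """Treat None and NaN as missing values."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def quality_report(values, low, high):
    """Return the fractions of missing, out-of-range, and usable values."""
    total = len(values)
    if total == 0:
        return {"missing": 0.0, "out_of_range": 0.0, "usable": 0.0}
    missing = sum(1 for v in values if is_missing(v))
    out_of_range = sum(
        1 for v in values if not is_missing(v) and not (low <= v <= high)
    )
    usable = total - missing - out_of_range
    return {
        "missing": missing / total,
        "out_of_range": out_of_range / total,
        "usable": usable / total,
    }


# Hypothetical body-temperature readings in degrees Celsius
temps = [36.6, None, 37.1, 250.0, float("nan"), 36.9, -5.0, 37.0]
report = quality_report(temps, low=30.0, high=45.0)
print(report)  # {'missing': 0.25, 'out_of_range': 0.25, 'usable': 0.5}
```

A check like this does not detect bias or subtle errors, but it quickly shows how much of a dataset can be trusted at all.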

5. Valence

Valence refers to connectedness. The more connected the data is, the higher its valence. The term comes from chemistry, where we talk about valence electrons. Valence electrons are in the outermost shell, have the highest energy level, and are responsible for bonding with other atoms. Higher valence results in greater bonding, that is, greater connectedness.

For a collection of data, valence measures the ratio of actually connected data items to the number of connections that could possibly occur within the collection.
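The ratio described above can be sketched in a few lines. This version assumes connections are undirected pairs of items, so a collection of n items has n(n-1)/2 possible connections; the function name and the sample data are made up for illustration.

```python
def valence(num_items, connections):
    """Ratio of actual to possible undirected connections between items."""
    possible = num_items * (num_items - 1) // 2
    if possible == 0:
        return 0.0
    # Count (a, b) and (b, a) once, and ignore self-links.
    actual = {frozenset(pair) for pair in connections if pair[0] != pair[1]}
    return len(actual) / possible


# Four hypothetical items (alice, bob, carol, dave); dave has no connections.
links = [("alice", "bob"), ("bob", "carol"), ("carol", "alice"), ("bob", "alice")]
print(valence(4, links))  # 3 of 6 possible pairs -> 0.5
```

A value of 0 means no items are connected and 1 means every item is connected to every other item.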

The most important aspect of valence is that data connectivity increases over time.

5 V's of Big Data as Dimensions of Big Data

Above we discussed the five V's of big data, often referred to as the dimensions of big data. Each of them highlights the challenges associated with one dimension of big data, namely size, complexity, speed, quality, and connectedness.

At the heart of the big data challenge is turning all of the other dimensions into truly useful business "value".

So, value is our sixth V. The main purpose behind collecting, storing, and analyzing data, and everything else we do with it, is to extract "Value" from big data.

Conclusion

In this article, we discussed the dimensions of big data and were introduced to the sixth V of big data, value, which is at the heart of all the other processes. If you have any further queries, post your questions in the comment section below. See you in my next article; till then, stay healthy and keep learning!
